Dear Diary, it is now day 20 of the OER Visualisation Project … One of the suggested outputs of this project was “collections mapped by geographical location of the host institution” and over the last couple of days I’ve experimented with different map outputs using different techniques. It’s not the first time I’ve looked at maps, and as early as day 2 I used SPARQL to generate a project locations map. At the time I got some feedback questioning the usefulness of this type of data, but I was still interested in pursuing the idea as a way to provide an interface for users to navigate some of the OER Phase 1 & 2 information. This obsession shaped the way I approached refining the data, trying to present project/institution/location relationships, which in retrospect was a red herring. Fortunately the refined data I produced has helped generate a map which might be interesting (though I thought there would be more from London), but I thought it would also be useful to document some of what I’m sure will end up on the cutting room floor.

Filling in the holes

One of the things the day 2 experiment showed was it was difficult to use existing data sources (PROD and location data from the JISC Monitoring Unit) to resolve all host institution names. The main issue was HEA Subject Centres and partnered professional organisations. I’m sure there are other linked data sources I could have tapped into (maybe inst. > postcode > geo), but opted for the quick and dirty route by:

- Creating a sheet in the PROD Linked Spreadsheet of all projects and partners, currently filtered for Phase 1 and 2 projects. I did try to also pull location data using this query but it was missing data, so instead I created a separate location lookup sheet using the queries here. As this produced 130 institutions without geo-data (Column M) I cheated and created a list of unmatched OER institutions (Column Q) [File > Make a copy of the spreadsheet to see the formula used, which includes an SQL-type QUERY].

- Resolving geo data for the 57 unresolved Phase 1 & 2 projects was a 3 stage process:

- Use the Google Maps hack recently rediscovered by Tony Hirst to get co-ordinates from a search. You can see the remnants of this here in cell U56 (Google Spreadsheets only allow 50 importDatas per spreadsheet so it is necessary to Copy > Paste Special > As values only).

- For unmatched locations ‘Google’ Subject Centres to find their host institution and insert the name in the appropriate row in Column W – existing project locations are then used to get coordinates.

- For other institutions ‘google’ them in Google Maps (if that didn’t return anything conclusive then a web search for a postcode was used). To get the co-ordinate pasted in Column W I centred their location on Google Maps then used the modified bookmarklet

javascript:void(prompt('',gApplication.getMap().getCenter().toString().replace(',','').replace(')','').replace('(','')));

to get the data.

- The co-ordinates in Column S and T are generated using a conditional lookup of existing project leads (Column B) OR IF NOT partners (Column F) OR IF NOT entered/searched co-ordinates.
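The fallback order described above (lead location, then partner location, then manually entered co-ordinates) can be sketched outside the spreadsheet. This is only an illustration, not the formula used in the sheet: the function names `parseCentre` and `resolveCoords`, the `Map` inputs and the sample values are all assumptions. The parser expects the space-separated "lat lng" text that the bookmarklet leaves after stripping the brackets and comma.

```javascript
// Hypothetical helper: parse the "lat lng" text left by the bookmarklet,
// e.g. "55.9533 -3.1883". Returns null when the text is not two numbers.
function parseCentre(text) {
  const parts = String(text).trim().split(/\s+/).map(Number);
  if (parts.length !== 2 || parts.some(Number.isNaN)) return null;
  return { lat: parts[0], lng: parts[1] };
}

// Hypothetical helper mirroring the conditional lookup:
// 1. location of a matching project lead,
// 2. otherwise location of a matching partner,
// 3. otherwise the co-ordinates entered or searched by hand.
// leadCoords and partnerCoords map names to {lat, lng};
// manualCoords maps names to the raw "lat lng" text.
function resolveCoords(name, leadCoords, partnerCoords, manualCoords) {
  return (
    leadCoords.get(name) ??
    partnerCoords.get(name) ??
    parseCentre(manualCoords.get(name) ?? "")
  );
}

// Illustrative data only (names and values are made up for the example).
const leads = new Map([["Example University", { lat: 53.01, lng: -2.18 }]]);
const partners = new Map([["Example College", { lat: 51.5, lng: -0.12 }]]);
const manual = new Map([["Example Subject Centre", "55.9533 -3.1883"]]);

console.log(resolveCoords("Example Subject Centre", leads, partners, manual));
```

An institution that appears in none of the three sources comes back as `null`, which makes the remaining gaps easy to list.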

Satisfied that I had enough lookup data I created a sheet of OER Phase 1 & 2 project leads/partners (filtering Project_and_Partners and pasting the values in a new sheet). Locations are then resolved by looking up data from the InstLocationLookup sheet.

Map 1 – Using NodeXL to plot projects and partners

Exporting the OER Edge List as a csv allows it to be imported to the Social Network Analysis add-on for Excel (NodeXL). Using the geo-coordinates as layout coordinates gives:

The international partners mess with the scale. Here’s the data displayed in my online NodeXL viewer. I’m not sure much can be taken from this.

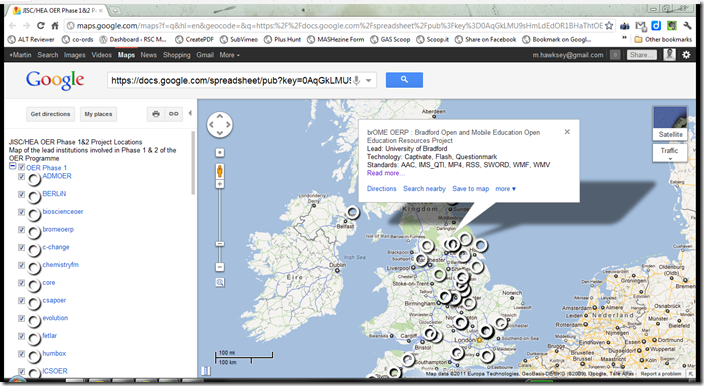

Map 2 – Generating a KML file using a Google Spreadsheet template for Google Maps

KML is an XML-based format for geodata originally designed for Google Earth, but now used in Google Maps and other tools. Without a templating tool like Yahoo Pipes, which was used in day 2, generating KML can be very laborious. Fortunately the clever folks at Google have come up with a Google Spreadsheet template – Spreadsheet Mapper 2.0. The great thing about this template is you can download the generated KML file or host it in the cloud as part of the Google Spreadsheet.
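To give a feel for what such a template has to produce, here is a minimal sketch of building a KML document from a list of places. It is not how Spreadsheet Mapper works internally; the function names `escapeXml` and `toKml` and the sample place are assumptions made for illustration. One detail worth noting is that KML writes co-ordinates as longitude,latitude, the reverse of the order Google Maps displays.

```javascript
// Hypothetical helper: escape the characters that would break XML text.
function escapeXml(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Hypothetical helper: turn [{name, lat, lng}, ...] into a KML string.
function toKml(places) {
  const placemarks = places.map(
    (p) =>
      "  <Placemark>\n" +
      `    <name>${escapeXml(p.name)}</name>\n` +
      // KML order is longitude first, then latitude.
      `    <Point><coordinates>${p.lng},${p.lat}</coordinates></Point>\n` +
      "  </Placemark>"
  );
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<kml xmlns="http://www.opengis.net/kml/2.2">',
    "<Document>",
    ...placemarks,
    "</Document>",
    "</kml>",
  ].join("\n");
}

// Illustrative data only.
console.log(toKml([{ name: "Example University", lat: 53.01, lng: -2.18 }]));
```

Writing this by hand for every project and partner is exactly the laborious part the spreadsheet template removes.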

The instructions for using the spreadsheet are very clear so I won’t go into details. You might, however, want to make a copy of the KML Spreadsheet for OER Phase 1 & 2 to see how data is being pulled from the PROD Spreadsheet. The results can be viewed in Google Maps (shown below), or viewed in Google Earth.

Map 3 – Customising the rendering of KML data using Google Maps API

Map 4 – Rendering co-ordinate data from a Google Spreadsheet

Another example I came across was using a Google Spreadsheet source, which gives this other version of OER Phase 1 & 2 Lead Institutions rendered from this sheet.

I haven’t even begun on Google Map Gadgets, so it looks like there are 101 ways to display geo data from a Google Spreadsheet. Although all of this data bashing was rewarding I didn’t feel I was getting any closer to something useful. At this point in a moment of clarity I realised I was chasing the wrong idea, that I’d made that schoolboy error of not reading the question properly.

Map 5 – Jorum UKOER records rendered as a heatmap in Google Fusion Tables

Having already extracted ‘ukoer’ records from Jorum and reconciled them against institution names in day 11, it didn’t take much to geo-encode the 9,000 records to resolve them to an institutional location (I basically imported a location lookup from the PROD Spreadsheet, did a VLOOKUP, then copy/pasted the values. The result is in this sheet).

For a quick plot of the data I thought I’d upload to Google Fusion Tables and render as a heatmap but all I got was a tiny green dot over Stoke-on-Trent for the 4000 records from Staffordshire University. Far from satisfying.

Map 6 – Jorum UKOER records rendered in Google Maps API with Marker Clusters

This is still a prototype version and lots of tweaking/optimisation is required. The data file, which is a csv to json dump, has a lot of extra information that’s not required (hence the slow load speed), but this is probably the beginnings of the best solution for visualising this aspect of the OER programme.
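One possible way to cut the load time is to strip each record down to the fields the map actually needs before writing the JSON. The sketch below is an assumption about how that could be done, not what the prototype does: the function name `slimRecords` and the field names (`title`, `lat`, `lng`, `description`) are made up for illustration and would need to match the real csv columns.

```javascript
// Hypothetical helper: keep only the named fields from each record.
// Fields missing from a record are simply left out of the result.
function slimRecords(records, keep) {
  return records.map((record) => {
    const slim = {};
    for (const key of keep) {
      if (key in record) slim[key] = record[key];
    }
    return slim;
  });
}

// Illustrative data only: one record with a field the map does not need.
const records = [
  { title: "Example resource", lat: 53.01, lng: -2.18, description: "long text" },
];

console.log(JSON.stringify(slimRecords(records, ["title", "lat", "lng"])));
```

With around 9,000 records, dropping long text fields like descriptions should shrink the file noticeably, though how much depends on what the dump contains.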

So there you go. Two sets of data, 6 ways to turn them into a map and hopefully some useful methods for mashing data in between.

I don’t have an ending to this post, so this is it.

Sheila MacNeill

Hi Martin

As ever fascinating stuff, I particularly like the KML mapping stuff. I think I may well be having a play with that. I think it provides a nice way in to prod at a programme level which I think could be v useful.

S

Martin Hawksey

Hi Sheila – initially I found the KML spreadsheet template a bit daunting as there were so many templates and options. Just using template#3 helped. It would be easy to dynamically take data from PROD and produce a KML file (just as it already produces XML, json). The issue would be there are 130 organisations in PROD that don’t automatically have location data from JISC MU but that’s fixable

M
