Google recently invited me to their annual conference in San Francisco, I/O 2012. Given my expertise in Google products, which I hope I’m sharing successfully with the sector, and the opportunity to bug Google engineers first-hand, JISC CETIS have kindly covered my travel and accommodation. It’s the first vendor conference I’ve been to and it’s been a heady mix of new product announcements, X Games-type shenanigans and product giveaways (since joining JISC CETIS I’ve been using my own equipment, so you could argue that, as there is now no need to buy me a phone, tablet and desktop (Chromebox), attending this event has been cost neutral ;s). In this first of a series of posts I’ll try to summarise some of the highlights of the sessions I attend, and their potential impact for the education sector.

Keynote Day 1

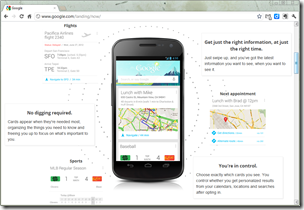

There wasn’t a lot here that is directly applicable to education, but here’s my take on what was presented. The main focus was on Google’s mobile operating system (Android), their social networking offering (Google+) and two technological punts: Google Glass and Nexus Q.

Android

So Android is still on the march, with handset activations increasing. Where Google has the advantage over Apple is in emerging powerhouses like Brazil and India, where growth is highest. Given that in these countries putting up phone masts is easier than laying cable, this is the way the internet is being delivered to the masses, which means Google is in an ideal place to capture vast amounts of usage data.

Under the hood Android is getting slicker with improved user interface processing. Accessibility appears to be getting more attention with more voice/gesture control. Android has had voice input for a while but this has always required a data connection. The latest version of Android now also enables this offline.

Will Google Now help students get to class on time? … I don’t know, but the scale of ‘intelligence’ did remind me of a scenario used in Terry Mayes’ Groundhog Day paper (2007):

“It is early morning in late summer, nearing the end of the third teaching semester at the Murdoch Institute for Psychological Studies at the University of Western Scotland. Jason, a student at the Institute, though at this moment still in bed in his girl friend’s flat in Brighton, voice-activates his personal learning station and yawns as Hal, his intelligent Web agent, bids him good morning. “How did you get on last night then, Jason?” “Never mind that, Hal, just tell me what I should be doing this morning”. “Well, you have a video-conference arranged with this week’s HoD, then you will be interviewed about your dreams by the school recruiting team, then I’ve scheduled you for a virtual reality tutorial with your statistics tutor in Tokyo. After that I think you should look at some data I’ve taken off the Web overnight for your virtual lab report this week.” Jason, saying nothing, points at an icon projected on the bedroom wall. A face appears. “Hello, Jason”. “Hi Eliza, I was just wondering if you’ve located that intelligent tutoring software on interviewing skills yet…” “Yes, but Jason, I think I should tell you that your personal grade for peer tutoring has gone down again…”

Mayes goes on to argue why this particular model is not beneficial to the student: they are being micromanaged rather than acting as a self-regulated learner. Maybe the answer is the thrust of Mayes’ paper: technology always promises, but never really delivers.

One last thing to say is that, as far as I know, most of the features mentioned are only available in the latest version of Android, 4.1. One of the issues Google has is OS lag: manufacturers developing devices for older versions of Android or not upgrading existing handsets to the new OS.

Google+ Events

The main announcement around Google+ was Events. Google+ Events, partially leaked before the conference, is a way to manage social events using Google+. The web interface lets you create and send invites to your friends (even those not on Google+). Not much new there, but what Google have also added is the ability for participants to contribute photos they take during the event by enabling ‘Party Mode’ in their mobile Google+ app. Photos taken in this mode are then all collected on a single event page, with comments and +1’s being used to pull out some highlights. One thought was that this could be used as a method for students to capture photo evidence while on placement, or as a way to collate images from field trips.

Something not mentioned in the keynote but picked up by Sarah Horrigan on the Learning Technologies Team blog at the University of Sheffield is the ability to schedule Hangouts. This could be useful if you are planning on using Google Hangouts for small online tutorials (I also attended a session on Google+ as a platform – more educational ideas for Hangouts will be included in that post).

Google Glass and Nexus Q

The opening keynote also contained two hardware punts into the technological future. Google Glass is an augmented reality head-mounted display Google are developing, and delegates were treated to a live demonstration which included some skydivers landing on the conference venue roof, all streamed through their Google Glasses in a Hangout. US citizens attending the conference were also given the chance to pre-purchase their own Glass for an eye-popping $1500, with delivery early next year. Wearable tech has been around for a long time. I’m guessing that, just as Apple made smartphones and tablets the must-have devices, Google want to do something similar with Glass. The final announcement was the Nexus Q, a ball you plug your speakers and TV into and control with your other Android devices, playing content from YouTube or purchased from the Google Play store.

It’s hard to see an educational slant on either of these. Glass comes close, but as it isn’t available yet and is very expensive I won’t dwell on it, as I’ve many more posts to write (if you want to watch the keynote here’s a recording).

If you’d like me to expand on any of the points in this post leave a comment or get in touch.

Lorna M. Campbell

Thanks for the update Martin. Your reference to Terry Mayes’ paper made me smile. I remember that paper; it’s interesting to revisit it in the light of recent developments.

And you’re right, I think we definitely need to get skydivers for CETIS13!

Martin Hawksey

😉 It’s a paper I keep revisiting and I’m sure there are more than a couple of quotes from it in this blog