This week saw me submit my application to the Shuttleworth Foundation to investigate and implement cMOOC architecture solutions, so it seems rather fitting that this week’s CFHE12 exploration includes an element of this. It’s in part inspired by a chance visit from Professor David Nicol to our office on Friday. It didn’t take long for the conversation to get around to assessment and David’s latest work on the cognitive processes of feedback, particularly student-generated feedback, which sounds like it directly aligns with cMOOCs. It was David’s earlier work on assessment and feedback principles, which I was involved in, that got me thinking about closing the feedback loop. In particular, the cMOOC model promotes participants working in their own space. The danger with this distributed network is that participants can become isolated nodes, producing content but not receiving any feedback from the rest of the network.

Currently within gRSSHopper course participants are directed to new blog posts from registered feeds via the Daily Newsletter. Below is a typical entry:

CFHE12 Week 3 Analysis: Exploring the Twitter network through tweets

Martin Hawksey, JISC CETIS MASHe

Taking an ego-centric approach to Twitter contributions to CFHE12 looking at how activity data can be extracted and used [Link] Sun, 28 Oct 2012 15:35:17 +0000 [Comment]

One of the big advantages of blogging is that most platforms provide an easy way for readers to feed back their own views via comments. In my opinion this is slightly complicated when using gRSSHopper as it provides its own commenting facility, the danger being that discussions can get fragmented (I imagine what gRSSHopper is trying to do is cover the situation when you can’t comment at source).

Even so, commenting activity, whether on source posts or within gRSSHopper itself, isn’t included in the daily gRSSHopper email. This means it’s difficult for participants to know where the active nodes are, that is, the posts receiving lots of comments, which could be useful for vicarious learning or as places to make their own contributions. Likewise it might be useful to know where the inactive nodes are so that moderators can either respond or direct others to comment.

[One of the dangers here is information overload, which is why I think it’s going to start being important to personalise daily summaries, either by profiling or some other recommendation type system. One for another day.]

To get a feel for blog post comment activity I thought I’d have a look at what data is available and possible trends, and provide some notes on how this data could be systematically collected and used.

Overview of cfhe12 blog post comment activity

Before I go into the results it’s worth saying how the data was collected. I need to write this up as a full tutorial, but for now I’ll just give an outline and highlight some of the limitations.

Data source

An OPML bundle of feeds extracted in week 2 was added to an installation of FeedWordPress. This has been collecting posts from 71 feeds, filtering for posts that contain ‘cfhe12’ by using the Ada FeedWordPress Keyword Filters plugin. In total 120 posts have been collected between 5th October and 3rd November 2012 (this compares to the 143 links included in Daily Newsletters). Data from FeedWordPress was extracted from the MySQL database as a .csv file, using the same query used in the ds106 data extraction.

This was imported to Open (née Google) Refine. As part of the data, FeedWordPress collects a comment RSS feed per post (a dedicated feed for comments made only on a particular post – a number of blogging platforms have a general comment feed which outputs comments for all posts). 31 records from FeedWordPress included ‘NULL’ values (this appears to happen if FeedWordPress cannot detect a comment feed, or the original feed comes from a Feedburner feed with links converted to feedproxy). Using Refine the comment feeds were fetched and then comment authors and post dates were extracted. In total 161 comments were extracted and downloaded into MS Excel for analysis.
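For anyone wanting to try the extraction step outside Refine, here is a minimal Python sketch of pulling comment authors and dates out of a per-post comment feed. It is not the workflow used above. It assumes a WordPress-style RSS 2.0 comment feed where each item carries `dc:creator` and `pubDate`; the sample feed, its URLs and the `extract_comments` name are invented for illustration, and other platforms may structure their feeds differently.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Dublin Core namespace, which WordPress-style feeds use for <dc:creator>.
DC = "{http://purl.org/dc/elements/1.1/}"

# Invented sample data shaped like a per-post comment feed.
SAMPLE_FEED = """<rss version="2.0" xmlns:dc="http://purl.org/dc/elements/1.1/">
  <channel>
    <title>Comments on: An example post</title>
    <item>
      <title>By: Reader One</title>
      <link>http://example.org/an-example-post/#comment-1</link>
      <dc:creator>Reader One</dc:creator>
      <pubDate>Mon, 29 Oct 2012 09:15:00 +0000</pubDate>
    </item>
    <item>
      <title>By: Reader Two</title>
      <link>http://example.org/an-example-post/#comment-2</link>
      <dc:creator>Reader Two</dc:creator>
      <pubDate>Tue, 30 Oct 2012 18:40:00 +0000</pubDate>
    </item>
  </channel>
</rss>"""


def extract_comments(feed_xml):
    """Return a list of {author, date, link} dicts, one per feed item."""
    root = ET.fromstring(feed_xml)
    comments = []
    for item in root.iter("item"):
        pub_date = item.findtext("pubDate")
        comments.append({
            "author": item.findtext(DC + "creator", default="unknown"),
            "date": parsedate_to_datetime(pub_date) if pub_date else None,
            "link": item.findtext("link"),
        })
    return comments


comments = extract_comments(SAMPLE_FEED)
for comment in comments:
    print(comment["author"], comment["date"].isoformat())
```

In practice the feed text would come from fetching each comment feed URL recorded by FeedWordPress, skipping the records where that value is ‘NULL’.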

Result

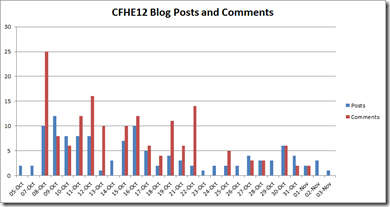

Below is a graph of cfhe12 posts and comments (the Excel file is also available on Skydrive). Not surprisingly there’s a tail off in blog posts.

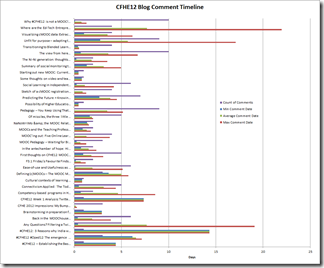

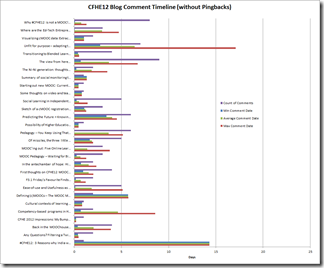

Initially looking at this on a per post basis (shown below left) showed that three of the posts were being commented on for over 15 days. On closer inspection it was apparent this was due to pingbacks (comments automatically left on a post as a result of it being linked to from another post). Filtering out pingbacks produced the graph shown on the bottom right.

Removing pingbacks, comments stopped on average 3.5 days after a post was published, but in this data there is a wide range from 0.2 days to 17 days. It was also interesting to note that some of the posts have high velocity: ‘Why #CFHE12 is not a MOOC!’ received 8 comments in 1.3 days, whereas ‘Unfit for purpose – adapting to an open, digital and mobile world (#oped12) (#CFHE12)’ gained 7 comments over 17 days (in part because the post author took 11 days to respond to a comment).
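The ‘days until comments stopped’ figure can be reproduced in a few lines. The sketch below is a rough Python equivalent rather than the Excel calculation used here. It assumes each comment is a dict with a `date` and an `is_pingback` flag that has already been set by some other means; the function name and the sample dates are made up.

```python
from datetime import datetime


def comment_lifespan_days(post_published, comments, include_pingbacks=False):
    """Days from publication to the last comment, or None if there are none."""
    dates = [
        c["date"] for c in comments
        if include_pingbacks or not c.get("is_pingback")
    ]
    if not dates:
        return None
    return (max(dates) - post_published).total_seconds() / 86400


# Invented example: two reader comments and one late pingback.
published = datetime(2012, 10, 28, 12, 0)
comments = [
    {"date": datetime(2012, 10, 29, 12, 0), "is_pingback": False},
    {"date": datetime(2012, 10, 31, 0, 0), "is_pingback": False},
    {"date": datetime(2012, 11, 14, 12, 0), "is_pingback": True},
]

lifespan = comment_lifespan_days(published, comments)
with_pingbacks = comment_lifespan_days(published, comments, include_pingbacks=True)
print(lifespan, with_pingbacks)
```

Running it with and without pingbacks shows how a single late pingback can stretch the apparent lifespan of a post.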

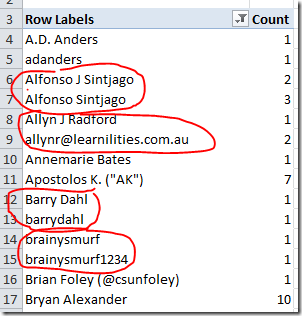

Looking at who the comment authors are is also interesting. Whilst initially it appears 70 authors have made comments, it’s apparent that some of these are the same author using different credentials, making them ‘analytically cloaked’ (H/T @gsiemens).
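One crude way to start uncloaking authors is to normalise display names and then merge known aliases by hand. The Python sketch below illustrates that idea only. The names, the alias map and both function names are hypothetical, the normalisation only handles ASCII names, and it cannot catch authors who use unrelated names on different platforms.

```python
import re
from collections import defaultdict


def normalise(name):
    """Lowercase the name and strip everything except a-z and 0-9."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def group_personas(names, aliases=None):
    """Group display names by normalised key, applying manual aliases."""
    aliases = aliases or {}
    groups = defaultdict(set)
    for name in names:
        key = normalise(name)
        key = aliases.get(key, key)  # manual merge for known personas
        groups[key].add(name)
    return dict(groups)


# Invented names: four variants of one person plus one other author.
names = ["Jane Doe", "jane doe", "@JaneDoe", "jdoe", "Sam Smith"]
groups = group_personas(names, aliases={"jdoe": "janedoe"})
for key, variants in groups.items():
    print(key, sorted(variants))
```

The manual alias map is the important part: automatic matching will only ever get you some of the way.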

Technical considerations when capturing comments

There are technical considerations when monitoring blog post comments and my little exploration around #cfhe12 data has highlighted a couple:

- multiple personas – analytically cloaked

- pingbacks in comments – there are a couple of patterns you could use to extract these but not sure if there is a 100% reliable technique

- comment feed availability – FeedWordPress appears to happily detect WordPress and Blogger comment feeds if not passed through a Feedburner feedproxy. Other blogging platforms look problematic. Also, not all platforms provide a facility to comment.

- 3rd party commenting tools – commenting tools like Disqus provide options to maintain a comment RSS feed but it may be down to the owner to implement and it’s unclear if FeedWordPress registers the url

- maximum number of comments – most feeds limit to the last 10 items. Reliable collection would require aggregating data on a regular basis.
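Two of the points above, pingbacks and the limit on feed length, can be sketched in Python. This is a sketch under assumptions, not a reliable technique. The pingback check relies on WordPress-style excerpts that start and end with a bracketed ellipsis, which will miss other platforms and could misfire on a genuine comment written that way. The merge step assumes each comment carries a stable `guid`. All names and sample data are invented.

```python
import re

# Heuristic only: excerpt wrapped in "[…]", "[...]" or "[&#8230;]" markers.
ELLIPSIS = r"(?:\u2026|\.\.\.|&#8230;)"
PINGBACK_RE = re.compile(
    r"^\s*\[" + ELLIPSIS + r"\].*\[" + ELLIPSIS + r"\]\s*$", re.S
)


def looks_like_pingback(text):
    """Guess whether a comment body is a pingback excerpt."""
    return bool(PINGBACK_RE.match(text))


def merge_snapshot(store, snapshot):
    """Add unseen comments from one feed fetch to a store keyed by guid."""
    added = 0
    for comment in snapshot:
        if comment["guid"] not in store:
            store[comment["guid"]] = comment
            added += 1
    return added


# Invented data: two fetches of the same feed, overlapping by one comment.
first_fetch = [
    {"guid": "c1", "text": "Very impressive post"},
    {"guid": "c2", "text": "[\u2026] linked from another post [\u2026]"},
]
second_fetch = [
    {"guid": "c2", "text": "[\u2026] linked from another post [\u2026]"},
    {"guid": "c3", "text": "Thanks for sharing"},
]

store = {}
added_first = merge_snapshot(store, first_fetch)
added_second = merge_snapshot(store, second_fetch)
reader_comments = [
    c for c in store.values() if not looks_like_pingback(c["text"])
]
print(len(store), len(reader_comments))
```

Fetching on a schedule and merging into a persistent store like this is what stops older comments being lost once a feed rolls past its item limit.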

This last point also opens the question of whether it would be better to regularly collect all comments from a target blog and do some post-processing to match comments to the posts you’re tracking, rather than hit a lot of individual comment feed URLs. This is key if you want to reliably track and reuse comment data both during and after a cMOOC course. You might want to refine this and extract comments for specific tags using the endpoints outlined by Jim Groom, but my experience from the OERRI programme is that getting consistent use of tags by others is very difficult.
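As an illustration of that post-processing step, the Python sketch below matches comments from a blog-wide comment feed to a list of tracked posts. It assumes each comment link is the post permalink plus a `#comment-…` fragment, as WordPress normally produces; paginated comment URLs and other platforms would need extra handling. The URLs and the function name are invented.

```python
from collections import defaultdict
from urllib.parse import urldefrag


def match_comments_to_posts(comments, tracked_post_urls):
    """Group comments by post URL, keeping only posts we are tracking."""
    tracked = {url.rstrip("/") for url in tracked_post_urls}
    by_post = defaultdict(list)
    for comment in comments:
        # Drop the "#comment-123" fragment to recover the post permalink.
        post_url = urldefrag(comment["link"]).url.rstrip("/")
        if post_url in tracked:
            by_post[post_url].append(comment)
    return dict(by_post)


# Invented data: a blog-wide comment feed covering two posts.
all_comments = [
    {"author": "Reader One", "link": "http://example.org/2012/10/post-a/#comment-1"},
    {"author": "Reader Two", "link": "http://example.org/2012/10/post-b/#comment-2"},
    {"author": "Reader Three", "link": "http://example.org/2012/10/post-a/#comment-3"},
]
tracked = ["http://example.org/2012/10/post-a/"]

matched = match_comments_to_posts(all_comments, tracked)
for post_url, post_comments in matched.items():
    print(post_url, [c["author"] for c in post_comments])
```

This trades many small requests for one request per blog, at the cost of having to discard comments on posts that are not part of the course.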

Gordon Lockhart

Very impressive Martin – and I share your reservations about the gRSShopper commenting system. This was at least partly why I experimented with a ‘Comment Scraper’ during the last part of the Change11 MOOC. ( http://gbl55.wordpress.com/2012/05/01/change11-mooc-comment-scraper-update/ ) The idea was to bring together brief summarised versions of recent blog posts along with their comments to give a quick impression of current activity – what it’s about and where it’s at. Since then I’ve updated the program (uses Python Feedparser) so that comments can be aggregated and pingbacks detected (I’m also uncertain about 100% reliability on pingback detection). It doesn’t use the “individual comment feed urls” – comments and post headings are matched only from the 2 main WP RSS files. I hope to blog about it all very shortly – I need to look at your recent contributions in detail as I’m not at all familiar with most of the tools you mention!

Martin Hawksey

Thanks Gordon for pointing me to your work. It’s nice to be able to join the dots and see who else is interested in this area. Do you run your script in the cloud (my immediate thought was whether it could run on scraperwiki, as having a public store of the data would make it easy for others to reuse the data)?

Gordon Lockhart

I’ve only run the Scraper locally so far – the project actually started as an exercise in learning Python and developed from there. Scraperwiki seems a great idea – I’ll now look into its possibilities – thanks!

Valerie Taylor

Looking at this from a different perspective – that of the learner / lurker, rather than commenting on the actual post, it is preferable to post “comments” or “replies” in my own blog.

If I post a comment on your blog to your post, I have no record of it. If I post in my own blog with a reference to your post (and hence pingback), then I have a trail of my own thinking and participation in the cMOOC.

Martin Hawksey

Hi Valerie – It’s a very valid point. I think at the end of the day it’s not about imposing a particular way on learners but as far as possible letting them do what they like. The challenge then is creating tools that can be adapted to many different learning styles. The starting point is developing something that covers the majority of use cases and then working from there. Would you agree?

Martin

cMOOC participation and feedback « open learning

[…] response to Blog post comments (notes on comment aggregation for cMOOCs) I wrote… Looking at this from a different perspective – that of the learner / lurker, […]

Valerie Taylor

It is an interesting problem. From the feedback in the early cMOOCs, there are would-be participants who aren’t prepared to accept and manage the degree of control over their learning environment that is key to cMOOC innovation.

More recently, facilitators aren’t giving participants the opportunity to figure it out. The latest round of MOOCs has hijacked the name and forced it into more conventional LMS technology that really doesn’t support some of the freedoms that ought to be left to the participants.

As you point out – this needs work…

Designing a Comment Scraper for MOOCs (and other animals) « Connection not Content

[…] their inclusion can considerably lengthen the display. Martin Hawksey (in an impressive post on blog post comments) mentions in the context of MOOCs, that, ” … it might be useful to know where the […]